The Cognitive Campus Center Journey

For decades, the industry had accepted a status quo plagued by fragile, overly complex, disparate operating systems, rigid hardware controllers,...


Jayshree Ullal

Aug 12, 2014 12:00:13 AM

Leading customers and researchers in cloud and data center networking have stressed for decades the importance of understanding TCP/IP flow and congestion control, speed mismatch, and adequate buffering. The problem space has not changed during this time, but a 100X increase in link speeds and a 1000X increase in storage capacity have aggravated the problem of reliable performance under load, particularly for data-intensive content and storage applications. One Arista fan summed it up best by saying:

“Basically the numbers have changed by order of magnitude, but the problem is the same!”

Poor performance in a demanding network is a painful reminder that buffering, flow control, and congestion management must be properly designed. TCP/IP was not inherently built for rate fairness (only window fairness is possible), and packets are intentionally dropped. Yet the effect of these drops can be multiplicative given major speed mismatches of 10-100X inside the data center. In the past, QoS and rate metering were adequate. However, at multi-gigabit and terabit speeds, and particularly as more storage moves from Fibre Channel (with buffer credits) to Ethernet, packet loss becomes more acute.
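To make the speed-mismatch point concrete, here is a minimal back-of-the-envelope sketch in Python. It assumes a simple fluid model that is not part of the original post: a burst arrives at the ingress line rate and drains at the slower egress rate, with no flow control and no other traffic. The function name `peak_queue_bytes` and the example burst size are illustrative assumptions.

```python
def peak_queue_bytes(burst_bytes: float, ingress_gbps: float, egress_gbps: float) -> float:
    """Peak queue depth for one burst crossing a speed mismatch (fluid model).

    Assumption: the burst arrives at the full ingress rate while the queue
    drains at the full egress rate, so the backlog at the end of the burst
    is burst_bytes * (1 - egress / ingress).
    """
    if burst_bytes < 0 or ingress_gbps <= 0 or egress_gbps <= 0:
        raise ValueError("burst size must be >= 0 and rates must be > 0")
    if ingress_gbps <= egress_gbps:
        return 0.0  # egress keeps up with ingress, so nothing queues
    return burst_bytes * (1.0 - egress_gbps / ingress_gbps)


if __name__ == "__main__":
    burst = 1_000_000  # hypothetical 1 MB burst
    for ingress, egress in [(10, 1), (40, 10), (100, 1)]:
        depth = peak_queue_bytes(burst, ingress, egress)
        print(f"{ingress}G -> {egress}G: peak queue of about {depth / 1e3:.0f} KB")
```

Under these assumptions, a 1 MB burst from a 10GbE sender into a 1GbE receiver leaves roughly 900 KB queued, so even one modest burst can consume most of a small per-port buffer.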

The primary need for dynamic queuing and buffering mechanisms is to prevent packet loss in mission-critical applications. This is especially important for bursty storage applications that rely on the network to deliver consistent performance fairly across a scale-out storage cluster.

A second benefit of properly sized buffers is the ability to schedule traffic with different priority classes (such as real-time, data and storage). This requires enough buffers to hold the traffic that needs to be scheduled.

Varied traffic patterns and types of data in the data center drive buffer requirements. In 10/40/100GbE applications, while one does not always need multiple gigabytes of buffer per 10GbE port, lower buffer capacity of 100KB-2MB per port is typically not sufficient and can be detrimental in any size of network. In many cases only the spine needs significant buffer to prevent loss of traffic under congestion. In other cases there may be more demand for buffer at the leaf – depending on the inherent over-subscription ratios designed into the network. The combination of the new 7280E leaf (9GB of buffer/system) or 7050X (12MB of buffer/system) and 7500E Spine (100MB+ of buffer/port, 144GB/system) provides a world-class implementation for some of the largest, most mission-critical storage network architectures in the world. Unique hardware powered by EOS software capabilities enables Arista to deliver a true universal cloud network – capable of supporting multiple workloads with different priorities and traffic patterns concurrently.
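The dependence on over-subscription can be sketched with simple arithmetic. The Python below is a rough illustration only. It uses the system buffer figures quoted above (12MB and 9GB), but the 48 x 10GbE downlink and 4 x 40GbE uplink layout is a hypothetical leaf, not the port configuration of any specific platform. It also assumes decimal megabytes and that the whole system buffer is available to the congested traffic, which is a simplification.

```python
def oversubscription_ratio(downlink_gbps: float, uplink_gbps: float) -> float:
    """Ratio of total downlink capacity to total uplink capacity."""
    if downlink_gbps <= 0 or uplink_gbps <= 0:
        raise ValueError("capacities must be > 0")
    return downlink_gbps / uplink_gbps


def absorb_time_us(buffer_bytes: float, offered_gbps: float, drain_gbps: float) -> float:
    """Microseconds a buffer can absorb sustained overload before it fills.

    Assumption: traffic is offered at a constant rate above the drain rate,
    and the entire buffer is usable by the congested queue.
    """
    excess_gbps = offered_gbps - drain_gbps
    if excess_gbps <= 0:
        return float("inf")  # no overload, so the buffer never fills
    seconds = (buffer_bytes * 8) / (excess_gbps * 1e9)
    return seconds * 1e6


if __name__ == "__main__":
    down = 48 * 10  # hypothetical: 48 x 10GbE server-facing ports
    up = 4 * 40     # hypothetical: 4 x 40GbE uplinks
    print(f"over-subscription: {oversubscription_ratio(down, up):.1f}:1")
    for label, size in [("12MB", 12e6), ("9GB", 9e9)]:
        t = absorb_time_us(size, offered_gbps=down, drain_gbps=up)
        print(f"{label} of buffer absorbs worst-case overload for about {t:,.0f} us")
```

With this hypothetical 3:1 layout, every downlink sending at line rate toward the uplinks would fill 12MB of buffer in roughly 300 microseconds, while 9GB would last on the order of a couple hundred milliseconds. Real traffic is rarely this extreme, but the comparison shows why buffer sizing has to be weighed against the over-subscription designed into the network.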

This leads to best-practice network architectures for demanding workloads, combined with the power of EOS, Arista’s programmable Extensible Operating System. Arista EOS offers uniquely integrated feature sets specifically focused on Hadoop/HDFS, including MapReduce Tracer, RAIL, DANZ, LANZ, and Zero Touch Provisioning (ZTP).

Key questions to ask to achieve that balanced optimal design are:

“What is your speed mismatch of 1/10/40/100GbE?”

“What are your peak load and query rates?”

“How much (minimum and maximum) load do I place on storage clusters?”

“How much visibility do we have into these storage/server workloads?”

“How much data is each job/task sending over the network?”

“Do the traffic patterns in the storage/server workloads lend themselves to incast situations?”

“How can I find and troubleshoot a worrisome node behind a particular interface?”

“How can I identify hot spots in my network before I experience packet loss so that I can be proactive and not reactive?”
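The last question, spotting hot spots before packets are lost, comes down to watching queue depth rather than drop counters. The Python below is a generic sketch of that idea and not the API of LANZ or any other EOS feature. The sample format (interface name, queue depth in bytes), the `find_hot_spots` function, and the thresholds are all hypothetical.

```python
from collections import defaultdict
from typing import Iterable


def find_hot_spots(
    samples: Iterable[tuple[str, int]],
    buffer_bytes: int,
    warn_fraction: float = 0.5,
    min_hits: int = 3,
) -> list[str]:
    """Return interfaces whose queue depth repeatedly nears the buffer limit.

    samples: (interface, queue_depth_bytes) readings from any telemetry source.
    An interface is flagged once it has at least `min_hits` readings at or
    above `warn_fraction` of the available buffer, i.e. before drops occur.
    """
    threshold = warn_fraction * buffer_bytes
    hits: dict[str, int] = defaultdict(int)
    for interface, depth in samples:
        if depth >= threshold:
            hits[interface] += 1
    return sorted(name for name, count in hits.items() if count >= min_hits)


if __name__ == "__main__":
    # Hypothetical readings against a 2 MB per-port buffer.
    readings = [
        ("Ethernet1", 1_200_000), ("Ethernet2", 40_000),
        ("Ethernet1", 1_500_000), ("Ethernet2", 1_100_000),
        ("Ethernet1", 1_900_000), ("Ethernet2", 30_000),
    ]
    print(find_hot_spots(readings, buffer_bytes=2_000_000))
```

Requiring several readings above the threshold is a deliberate choice in this sketch: a single deep-queue sample is often a harmless microburst, while repeated ones point to a node or interface worth investigating.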

As traditional storage networks migrate to software-defined storage architectures, the need for a universally capable network infrastructure becomes more and more relevant, because the data an application references is itself becoming mobile and distributed across the network. The power of this distributed architecture is directly proportional to the quality of the network infrastructure over which these nodes communicate, synchronizing CPU, disk/flash read/write, and network I/O performance. Organizations have therefore realized that the key to unlocking highly effective, optimally performing storage investments is a network designed for massive scale, efficient operational manageability, and stability. Balanced network distribution requires not only larger buffers but also smart buffer allocation and intelligent tracking of workload resources.

It is indeed an exciting time in modern networking with cloud economics possible in mainstream enterprises. I wish my Arista readers and well-wishers a happy summer of 2014 as we continue to distinguish marketing from real world use-cases in Software Driven Cloud Networking.

As always, I welcome your comments at feedback@arista.com.

For decades, the industry had accepted a status quo plagued by fragile, overly complex, disparate operating systems, rigid hardware controllers,...

/Images%20(Marketing%20Only)/Blog/Many-Facets-AI-Blog-Image.jpg)

As computational resources scale to meet the demands of large generative artificial intelligence (AI) models, networking plays a crucial role in...

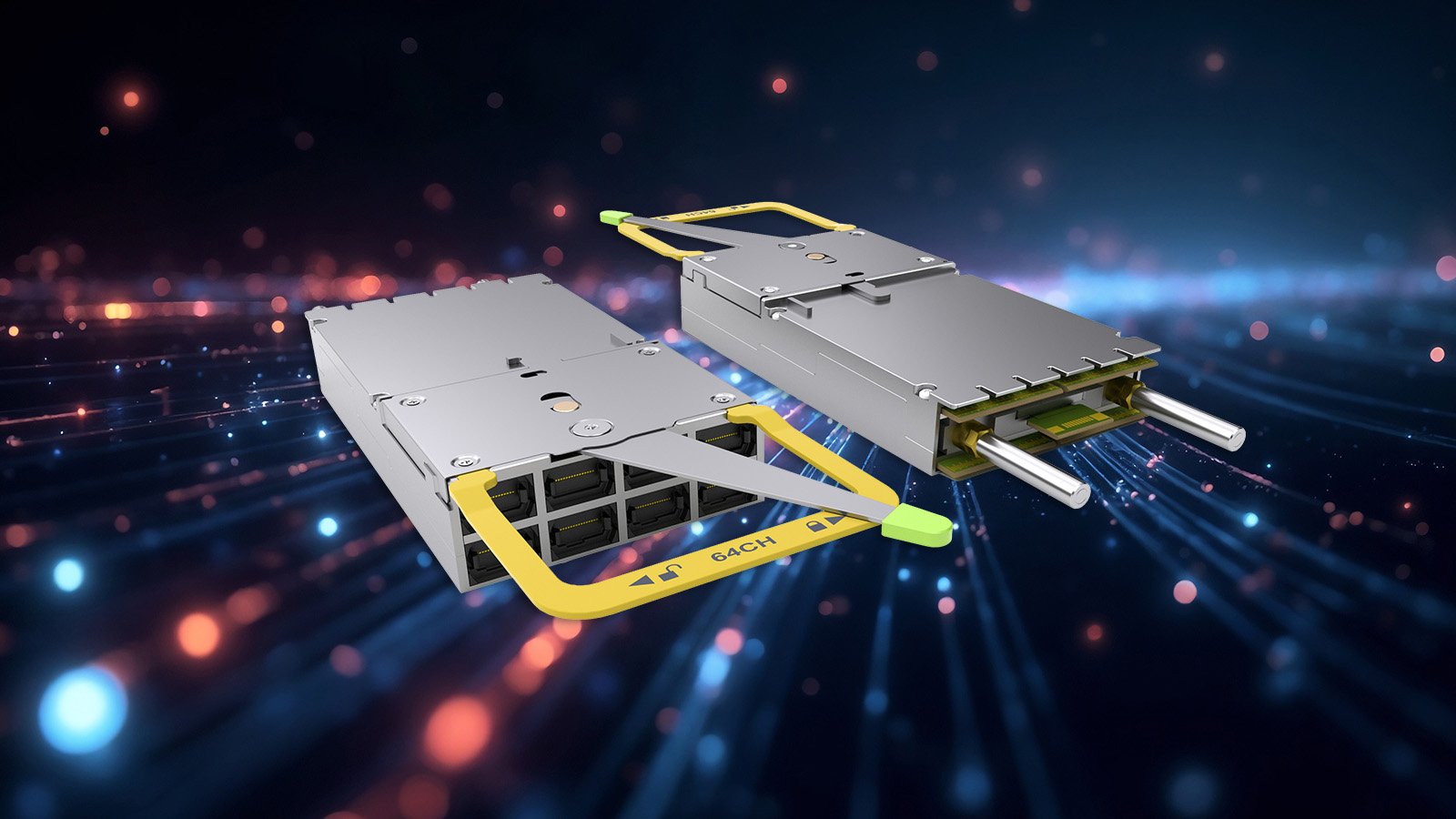

In 2016, Arista Networks together with powerful industry leaders, announced the OSFP (Octal Small Form-Factor Pluggable) specification and...