Jayshree Ullal: Apr 13, 2009 12:06:56 AM

As we enter this new era of dense servers and multi-core cloud computing, we must pay closer attention to choosing the right cloud networking technology for seamless compute, network, and storage access. I find myself in the midst of "Ethernot vs. Ethernet" choices. Three candidates present themselves as viable options: 10 Gigabit Ethernet, Fibre Channel over Ethernet (FCoE), and InfiniBand. All three boast speeds of 10 Gbit/s or higher and promise to virtualize I/O by carrying other network protocols inside their fat pipes. Each comes with its own nuances. Let's quickly review the choices.

10 Gigabit Ethernet (10GbE) is clearly the most widespread and standards-based technology. It is a natural progression from 1 Gigabit Ethernet for server and storage access, and it relies on widely deployed Layer 2 and Layer 3 protocols. 10GbE provides cost-effective server connectivity with low, predictable latency of a few microseconds, given adequate buffering. Non-blocking cloud networking topologies can be built as a two-tier design with active, multipathed uplinks (at L2 or L3), spanning 100 to 1,000+ nodes. Seamless storage access is possible over 10GbE through iSCSI-based block storage or network-attached file storage. Standards-based products such as Arista's 71XX family cost only 2-3 times as much as 1GbE, with prices as low as $500 per switch port. Imminent 10GbE LAN-on-motherboard server designs and the emergence of 10GBASE-T, which runs 10GbE over twisted-pair copper at distances of 30-100 meters (existing Category 6 or new Category 6a cabling), promise a smooth transition and familiar operations.
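To make the "100 to 1,000+ nodes" range concrete, here is a minimal sizing sketch for a non-blocking two-tier fabric. It rests on simplifying assumptions that are not from the original post: every switch is identical, every port runs at the same speed, each leaf splits its ports evenly between servers and uplinks, and each leaf has exactly one uplink to every spine. The function name and the port counts are hypothetical, chosen only for illustration.

```python
def two_tier_capacity(ports_per_switch: int) -> dict:
    """Size a non-blocking two-tier fabric built from identical switches.

    Assumptions (illustrative only): all ports run at the same speed,
    each leaf uses half its ports for servers and half for uplinks,
    and each leaf connects to every spine with exactly one uplink.
    """
    if ports_per_switch < 2 or ports_per_switch % 2:
        raise ValueError("ports_per_switch must be an even number >= 2")

    down = ports_per_switch // 2      # server-facing ports per leaf
    up = ports_per_switch - down      # uplinks per leaf (1:1, so non-blocking)
    spines = up                       # one uplink from each leaf to each spine
    leaves = ports_per_switch         # each spine port feeds exactly one leaf
    return {"spines": spines, "leaves": leaves, "servers": leaves * down}


if __name__ == "__main__":
    for ports in (24, 48):
        print(ports, two_tier_capacity(ports))
```

Under these assumptions, 24-port switches yield 288 server ports and 48-port switches yield 1,152, which brackets the range quoted above. Real designs often accept some oversubscription at the leaf, which raises the node count at the cost of the non-blocking guarantee.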

While iSCSI and file storage can run on tried-and-true 10GbE, Fibre Channel over Ethernet (FCoE) matters to the Fibre Channel SAN world only if convergence of SAN and Ethernet is desired. Layering Fibre Channel protocols on Ethernet requires special changes to standard Ethernet, such as priority flow control (PFC). Existing Ethernet is designed to drop packets gracefully under congestion, which would break Fibre Channel links, so servers need special NICs with FCoE support to connect to this emerging infrastructure. Standards for FCoE and the associated PFC are being finalized in 2009 by the IEEE Data Center Bridging (DCB) group, and standards-compliant, interoperable products are expected in the 2010 timeframe. Deploying new storage protocols like FCoE will take time. The convergence of storage protocols such as iSCSI (and, in future, FCoE) can be viewed as an optional overlay on 10GbE transport rather than a separate flavor.
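The difference between drop-based Ethernet and pause-based flow control can be illustrated with a toy simulation. This is a rough sketch, not a model of the actual PFC mechanism: the function name, the tick-based timing, and the single pause threshold are all assumptions made for illustration, and real PFC operates per priority class with headroom for frames already in flight.

```python
def run_link(frames: int, buffer_size: int, drain_every: int, pfc: bool) -> dict:
    """Push `frames` frames through a finite switch buffer.

    Each tick the sender offers one frame unless it is paused; the switch
    forwards one queued frame every `drain_every` ticks. All parameters
    are illustrative and not taken from any standard.
    """
    if buffer_size < 1 or drain_every < 1:
        raise ValueError("buffer_size and drain_every must be >= 1")

    queue = sent = dropped = delivered = ticks = 0
    while delivered + dropped < frames:
        ticks += 1
        # With pause-based flow control, a full buffer pauses the sender.
        paused = pfc and queue >= buffer_size
        if sent < frames and not paused:
            sent += 1
            if queue < buffer_size:
                queue += 1
            else:
                dropped += 1          # classic Ethernet behavior: tail drop
        if ticks % drain_every == 0 and queue > 0:
            queue -= 1
            delivered += 1
    return {"delivered": delivered, "dropped": dropped, "ticks": ticks}


if __name__ == "__main__":
    print("lossy   :", run_link(100, buffer_size=8, drain_every=2, pfc=False))
    print("lossless:", run_link(100, buffer_size=8, drain_every=2, pfc=True))
```

With the sender running faster than the switch can drain, the lossy run drops frames once the buffer fills, while the paused run delivers every frame at the cost of the sender spending time idle. That loss-free behavior is what Fibre Channel traffic expects from its transport.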

InfiniBand (IB) is already deployed in large, high-end HPC clusters. It requires special host adapters and switches, and often a significant number of gateways to connect the IB island to the Ethernet galaxy. It has been installed at data rates of 8 Gbit/s and, at double data rate, 16 Gbit/s (on 4x links, after 8b/10b encoding overhead), and it is now transitioning to a quad data rate of 32 Gbit/s. InfiniBand boasts the lowest latencies, which ultra-high-performance compute clusters require, and it will remain in that HPC niche. It is important to note that InfiniBand is a good server interconnect, but it does not provide complete flow control for scalable networks, needs gateways, and requires a dedicated operations team.

In my long career in networking, I have learned not to bet against Ethernet. I have heard every logical argument for the deterministic virtues of token passing in 100 Mbps FDDI and of the 53-byte cells of ATM, yet these technologies became transient, niche chapters in history. The mainstream choice and winner is obvious. Yes, it is 10 Gigabit Ethernet. FCoE may become an overlay option over time as standards and interoperability mature. The introduction of 10GBASE-T, combined with the evolution to higher speeds of 40 Gigabit and 100 Gigabit Ethernet, makes 10GbE migrations more compelling. Welcome to the new, yet familiar, world of 10GbE-based cloud networking!

Your feedback is welcome at feedback@arista.com

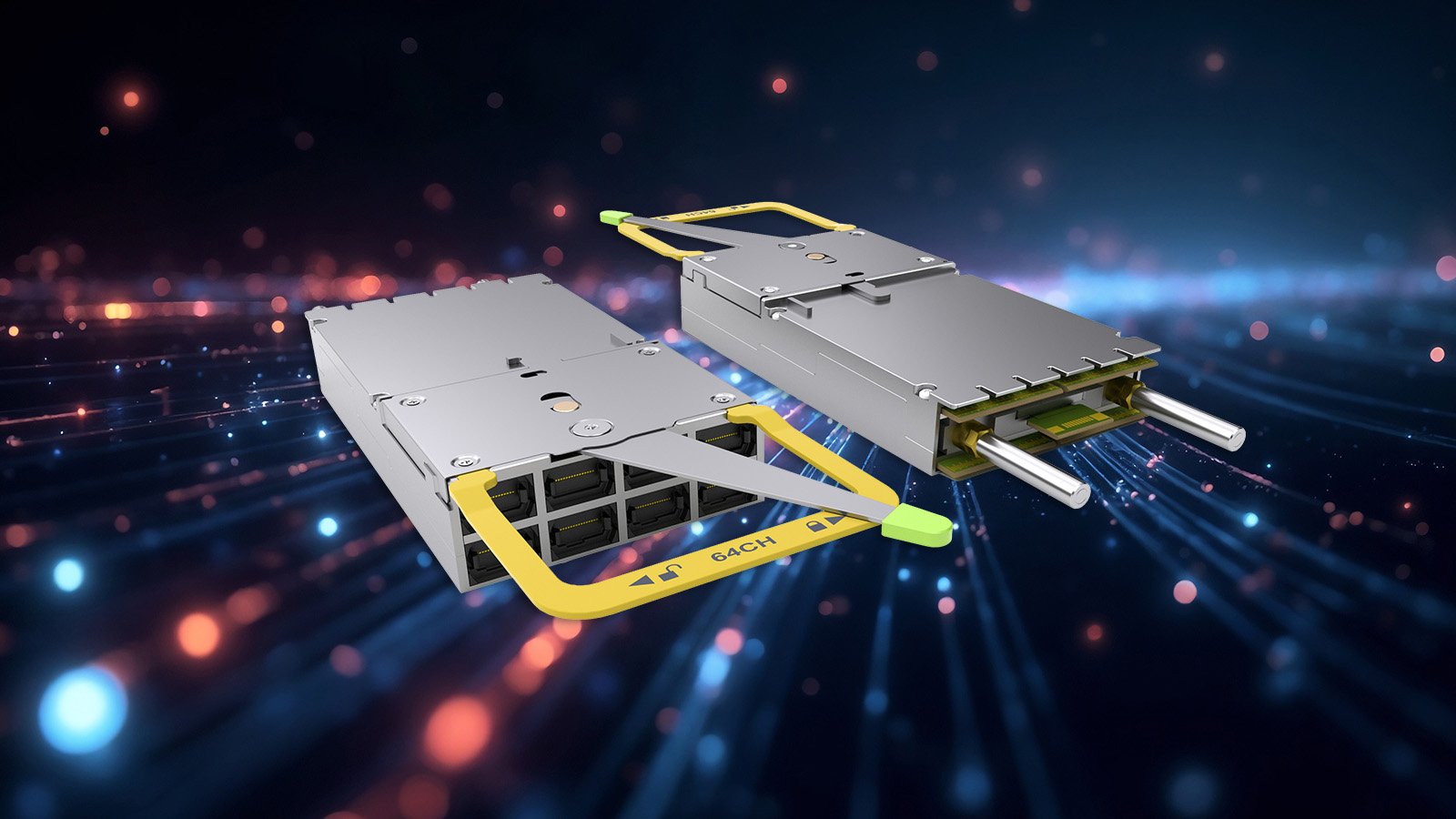

In 2016, Arista Networks together with powerful industry leaders, announced the OSFP (Octal Small Form-Factor Pluggable) specification and...

Leaf-spine architectures have been widely deployed in the cloud, a model pioneered and popularized by Arista since 2008. Arista’s flagship 7800...

The explosive growth of generative AI and the demands of massive-scale cloud architectures have fundamentally redefined data center networking...