The Cognitive Campus Center Journey

For decades, the industry had accepted a status quo plagued by fragile, overly complex, disparate operating systems, rigid hardware controllers,...


Jayshree Ullal

Jul 14, 2009 3:30:33 AM


One of Om Malik's recent blogs that I read in Gigaom was thought-provoking (as usual!). It made me realize that we have reached a tipping point with the introduction and deployment of powerful multi-core processors. They have begun to challenge the foundation of today's anemic network connectivity. A network running at 1 gigabit is becoming the weak link in the data center. The adoption of just about every web-scale application, be it file system protocols, Map-Reduce frameworks with Hadoop clusters, or memcached's giant hash tables, is placing far greater demands on scalability. We are no longer limited by compute power, as dense cloud computing enables processing speeds of 1.6 petaflops, which outpaces even Moore's law. Compute capacity could vastly outstrip network bandwidth by 2:1 if we are not prepared. In fact, we are more network-bound, by Nielsen's Law.

Consider a simple rule of thumb to guide compute-network ratios.

1 gigahertz of CPU = 1 gigabit per second of I/O.

This means a single-core 2.5 gigahertz x86 CPU already requires 2.5 gigabits of networking, and a quad-core needs four times as much, that is, 10 gigabit networking. In Arista's customer applications we have seen peak loads of 10 Gbps of traffic over Ethernet through a single Xeon 5500-based server. The intensity of workloads and compute utilization can vary, from large data transfers to clusters and file storage. What is clear is that networking throughput is no longer compute-bound, but limited by the fine-tuning of the stack and the achievable network performance. High Performance Compute (HPC) workloads are likely to peak at more than 10 Gbps per server, especially when you couple them with storage traffic on the same network.
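The rule of thumb above can be sketched in a few lines of Python. This is only an illustration of the arithmetic in the text; the function name `required_network_gbps` and its parameters are hypothetical, and the rule itself is a rough guide, not a measured result.

```python
def required_network_gbps(clock_ghz: float, cores: int = 1) -> float:
    """Estimate network I/O demand from the rule of thumb:
    1 GHz of CPU is roughly 1 Gbps of I/O, scaled by the number of cores.
    (Hypothetical helper for illustration only.)
    """
    if clock_ghz < 0 or cores < 1:
        raise ValueError("clock_ghz must be >= 0 and cores must be >= 1")
    return clock_ghz * cores


if __name__ == "__main__":
    # Single-core 2.5 GHz CPU from the text
    print(required_network_gbps(2.5))       # 2.5
    # Quad-core at the same clock speed
    print(required_network_gbps(2.5, 4))    # 10.0
```

By this estimate, a single quad-core server already fills a 10GbE link, which matches the peak loads described above.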

The exponential effect of virtualized servers creates thousands of virtual machines running around in Brownian motion, saturating the network I/O. In a typical virtualization deployment, it is quite common to see servers running VMware's ESX 4.0 and deploying an average of six 1-Gigabit Ethernet NICs (Network Interface Cards) to cope with the multiplicity of servers. Another test came close to saturating even a 10GbE link between a pair of octal-core Xeon 5500 machines, each running four Xen VMs and NICs with I/O virtualization features. By replacing multiple 1-Gigabit links with a single 10 Gbps Ethernet pipe (with a NIC and switch capable of virtual partitioning), one can simplify, save costs, and increase throughput in real production environments. More on this virtualization topic in my upcoming blogs.

As virtualized servers push the limits and drive 10 Gbps of Ethernet traffic in the access or "leaf" layer of the network, we must pay equal attention to the ripple effect in the core backbone or "spine" network (refer to my Jan blog). Take a configuration with racks of 40 servers each, with every server putting out a nominal 6-9 Gbps of traffic. Each rack will have a flow of 240-360 Gbps, and a row of 10-40 racks will need to handle 2 Tbps to 10+ Tbps. Especially when the servers exchanging flows are in close proximity to each other, two-tiered non-blocking leaf-spine architectures based on the Arista 71XX are optimal.
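The rack and row figures follow from simple multiplication, sketched below in Python. The helper names are hypothetical and the inputs are the nominal numbers quoted in the text (40 servers per rack, 6-9 Gbps per server, 10-40 racks per row); real traffic depends on the workload.

```python
def rack_traffic_gbps(servers_per_rack: int, gbps_per_server: float) -> float:
    """Aggregate traffic leaving one rack, in Gbps (hypothetical helper)."""
    return servers_per_rack * gbps_per_server


def row_traffic_tbps(racks: int, servers_per_rack: int, gbps_per_server: float) -> float:
    """Aggregate traffic for a row of racks, in Tbps (1 Tbps = 1000 Gbps)."""
    return racks * rack_traffic_gbps(servers_per_rack, gbps_per_server) / 1000


if __name__ == "__main__":
    # Per-rack range for 40 servers at 6 and 9 Gbps each
    print(rack_traffic_gbps(40, 6), rack_traffic_gbps(40, 9))   # 240 360
    # Smallest row at the low rate, largest row at the high rate
    print(row_traffic_tbps(10, 40, 6))
    print(row_traffic_tbps(40, 40, 9))
```

With these inputs, the smallest row works out to about 2.4 Tbps and the largest to about 14.4 Tbps, consistent with the "2 Tbps to 10+ Tbps" range quoted above.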

As multi-core processors and virtualization are deployed widely, a new scale of bandwidth is needed: 10GE/40GE and eventually 100GE. Otherwise the network becomes the limiting factor for application and cloud-based performance and delivery. This translates to multiple clusters of 1,000-10,000 nodes and terabits of scale! Welcome to the new era of cloud networking! Your thoughts are always welcome at feedback@arista.com

References:

Nielsen's Law

For decades, the industry had accepted a status quo plagued by fragile, overly complex, disparate operating systems, rigid hardware controllers,...

/Images%20(Marketing%20Only)/Blog/Many-Facets-AI-Blog-Image.jpg)

As computational resources scale to meet the demands of large generative artificial intelligence (AI) models, networking plays a crucial role in...

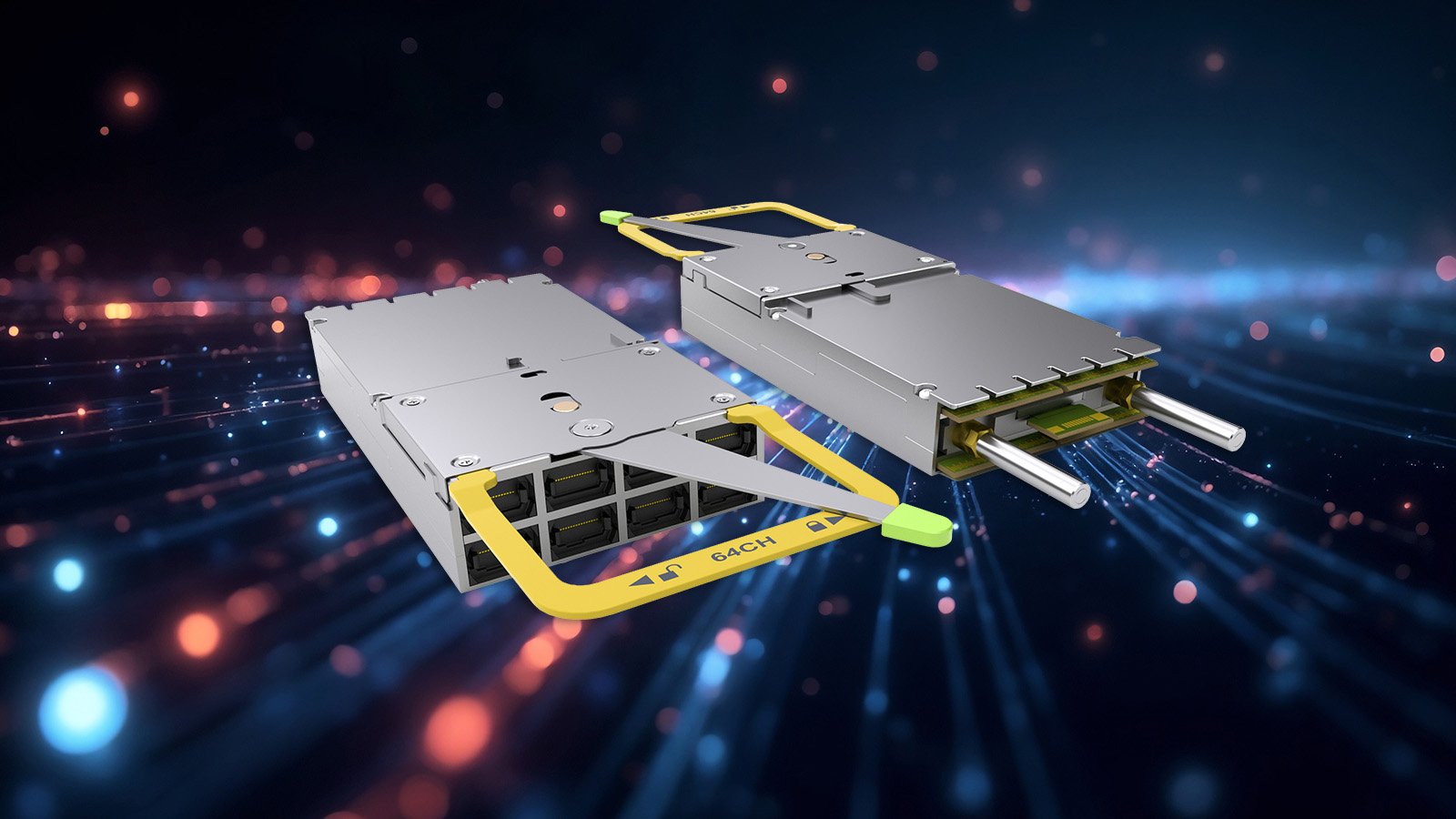

In 2016, Arista Networks together with powerful industry leaders, announced the OSFP (Octal Small Form-Factor Pluggable) specification and...