AI Datacenters are Reshaping the Optics Industry

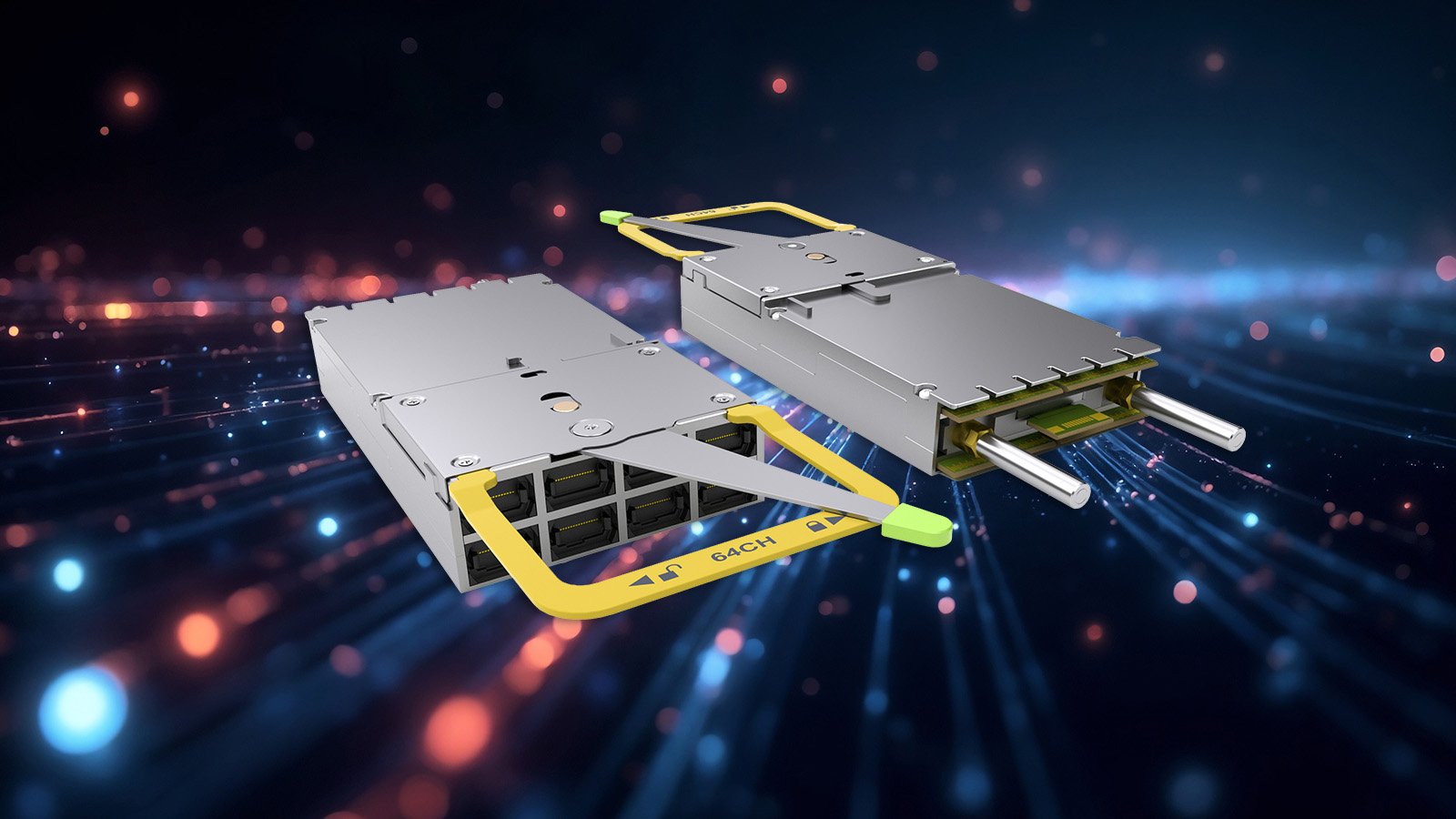

In 2016, Arista Networks together with powerful industry leaders, announced the OSFP (Octal Small Form-Factor Pluggable) specification and...


Douglas Gourlay, Nov 5, 2019 3:57:53 AM


Right around the time I joined Arista in 2009, Amazon Web Services developed the concept of the Virtual Private Cloud (VPC). It became one of the seminal technologies of the public cloud, a core construct that lets enterprise customers corral and protect resources, provision them into logical groups, align security policies, and simplify their management. Google followed with a model for Virtual Private Clouds that spanned regions, allocating one subnet per region by default and creating the first multi-region VPC within a single cloud provider.

In our endless quest at Arista to deliver an amazing operator experience, we realized that while this was a great start and an amazing technology, the evolution had to continue. We needed to deliver a multi-region, multi-provider virtual private cloud capability that spans the campus, the data center, and the AWS, Azure, and Google public clouds, and that even reaches down into the Kubernetes host: a true multi-cloud and cloud-native capability, delivered through Arista EOS®.

We have, obviously, named this version of EOS CloudEOS™. It inherits all of the best capabilities of EOS; in fact, it is built from the same source code, so it is fully feature-, API-, and code-compatible with its progenitor. But there are some amazing new differences worth highlighting and discussing:

Cloud with Guardrails

I used to hear that people wanted to ‘move fast and break things’. Sure, that is fine if you’re hacking away on a web app for non-mission-critical stuff. But we’re talking about networks here: the things that connect heart monitors in surgical theatres, link up the physical security cameras at airports, and move trillions of dollars a day in financial exchange transactions. Here, in the network, things are different. We want to move fast and not break things.

Our goal with CloudEOS is simple: we want DevOps teams to use the tools they are familiar with to add, scale, and adjust their compute, storage, and cloud service requirements as quickly as possible in a rapid-fire, dynamic environment. We want to enable true business agility. We also want the networking team to be confident that the guardrails are in place: that the CIDR block for the ERP system won’t get accidentally duplicated, that no one can connect an external test segment to a production data store in the enterprise, and that critical changes and deployments to production segments can require a code review in the network, too, before they reach production.
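To make the guardrail idea concrete, here is a minimal Python sketch of two of the checks described above: rejecting a new CIDR block that overlaps an existing allocation, and refusing a link between segments on a deny list. The inventory, the segment names, and the functions `find_cidr_conflicts` and `link_allowed` are hypothetical illustrations, not how CloudEOS implements its guardrails; only the standard-library `ipaddress` module is real.

```python
import ipaddress

# Hypothetical inventory of CIDR blocks already allocated to VPCs.
# These names and ranges are illustrative, not real data.
EXISTING_ALLOCATIONS = {
    "erp-prod": ipaddress.ip_network("10.10.0.0/16"),
    "web-prod": ipaddress.ip_network("10.20.0.0/16"),
    "test-lab": ipaddress.ip_network("172.16.0.0/20"),
}

# Hypothetical segmentation policy: unordered pairs of segments that
# must never be connected to each other.
FORBIDDEN_LINKS = {frozenset({"external-test", "production-datastore"})}


def find_cidr_conflicts(candidate, allocations):
    """Return the sorted names of existing allocations that overlap the candidate CIDR.

    strict=True rejects a candidate with host bits set (e.g. "10.10.1.5/16").
    """
    new_net = ipaddress.ip_network(candidate, strict=True)
    return sorted(
        name
        for name, net in allocations.items()
        # Only compare networks of the same IP version.
        if net.version == new_net.version and net.overlaps(new_net)
    )


def link_allowed(segment_a, segment_b):
    """Return False if the (unordered) segment pair is on the deny list."""
    return frozenset({segment_a, segment_b}) not in FORBIDDEN_LINKS


if __name__ == "__main__":
    print(find_cidr_conflicts("10.10.128.0/17", EXISTING_ALLOCATIONS))
    print(find_cidr_conflicts("10.30.0.0/16", EXISTING_ALLOCATIONS))
    print(link_allowed("external-test", "production-datastore"))
```

Here `find_cidr_conflicts("10.10.128.0/17", EXISTING_ALLOCATIONS)` returns `['erp-prod']`, because that /17 sits inside the ERP block, so a deployment pipeline built on a check like this could stop the allocation before it ever reaches production.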

See, when you’re on a racetrack going as fast as you can to win, guardrails help you go faster. You’re more confident that the track itself is helping you stay on it, and with that confidence you can push harder and go even faster.

So go on, take CloudEOS for a spin: it’s available in Azure and AWS today, and will be in Google Cloud shortly. We have customers with as few as four or five VPCs using it to simplify transit, and massive scale-out customers with 500 to 1,000 VPCs taking advantage of elastic scale-up/down and dynamic path selection.
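Why transit matters more as VPC counts grow can be shown with a standard count. Assuming, for illustration only, that the alternative to a shared transit layer is a full mesh of pairwise VPC peerings, and that transit needs one attachment per VPC (this is not a description of how CloudEOS is wired), the number of connections to manage for $n$ VPCs is:

```latex
P_{\text{mesh}}(n) = \binom{n}{2} = \frac{n(n-1)}{2},
\qquad
P_{\text{transit}}(n) = n

n = 5:\quad P_{\text{mesh}} = \frac{5 \cdot 4}{2} = 10,
\qquad P_{\text{transit}} = 5

n = 1000:\quad P_{\text{mesh}} = \frac{1000 \cdot 999}{2} = 499{,}500,
\qquad P_{\text{transit}} = 1000
```

The mesh grows quadratically while transit grows linearly, which is why the benefit that is modest at five VPCs becomes dramatic at a thousand.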

Also worth checking out is this demo, in which the inestimable Fred Hsu takes us on a tour of CloudEOS at work, simplifying, scaling, and operating multi-cloud and cloud-native infrastructure.
