The Cognitive Campus Center Journey

For decades, the industry had accepted a status quo plagued by fragile, overly complex, disparate operating systems, rigid hardware controllers,...


Jayshree Ullal

Jul 27, 2010 9:30:47 AM

As we enter the second half of 2010, I sense that an architectural shift in data center environments has begun replacing traditional constructs. Traffic patterns are rapidly shifting from the traditional north-south client-server model to an east-west server-to-server model. As this shift occurs, it is even more important to assess the impact of network latency on application performance. Resources and applications are more dynamic and more dependent on workloads and user access, which requires a more vertical application focus in networking to handle application performance.

Two architectures have emerged to address this: Arista 7100 cut-through switches and Arista 7500/7048T series VOQ switches.

Dynamic Buffer Allocation for Symmetric Applications

For latency-sensitive environments, “cut-through” switching is deployed. Its latency is measured in the sub-microsecond range, and it offers the lowest latency with minimal buffers at the port level to guarantee real-time traversal of information. This is an ideal architecture for “leaf” and low-latency designs with symmetric traffic patterns. Occasionally, traffic patterns exhibit short, sub-microsecond bursts commonly referred to as “microbursts”: a sudden traffic load that occurs briefly and is transient in nature. Recently, many benchmarks have been artificially generated to illustrate the dangers of microbursts, often by creating unrealistic fan-out environments. Arista switches are designed to handle real-world traffic loads smoothly, with adequate buffering as well as a Dynamic Buffer Allocation (DBA) technique that allows ports to claim additional buffer capacity.

This is most applicable in high-frequency trading and gaming applications that demand ultra-low latency using cut-through switching techniques with the Arista 7100 series.
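To make the idea of ports claiming additional buffer capacity concrete, here is a minimal sketch in Python. It is a toy model of a generic shared-pool scheme, not a description of how Arista's DBA is implemented; the class name, the method names, and the byte counts are all hypothetical and chosen only for illustration.

```python
class SharedBufferPool:
    """Toy model: each port has a small reserved buffer and may borrow
    from a shared pool during a burst. All sizes are illustrative."""

    def __init__(self, ports, reserved_per_port, shared_total):
        self.reserved = reserved_per_port
        self.shared_free = shared_total
        self.used = {p: 0 for p in range(ports)}

    def enqueue(self, port, size):
        """Return True if the packet is buffered, False if it is dropped."""
        used = self.used[port]
        # Part of the packet that still fits in the port's reserved space.
        from_reserved = max(0, min(size, self.reserved - used))
        # The remainder must be borrowed from the shared pool.
        from_shared = size - from_reserved
        if from_shared > self.shared_free:
            return False
        self.shared_free -= from_shared
        self.used[port] = used + size
        return True

    def dequeue(self, port, size):
        """Drain up to `size` bytes from a port, returning borrowed space first."""
        used = self.used[port]
        size = min(size, used)
        # Anything above the reserved mark was borrowed from the shared pool.
        borrowed = max(0, used - self.reserved)
        self.shared_free += min(size, borrowed)
        self.used[port] = used - size


if __name__ == "__main__":
    pool = SharedBufferPool(ports=4, reserved_per_port=10, shared_total=20)
    print(pool.enqueue(0, 10))  # fits in port 0's reserved space
    print(pool.enqueue(0, 15))  # burst: port 0 borrows from the shared pool
    print(pool.enqueue(0, 10))  # shared pool cannot cover this one
    print(pool.enqueue(1, 10))  # port 1 still has its own reserved space
```

In this sketch a burst on one port can grow into the shared pool, while the reserved space of every other port stays untouched, so a transient microburst on one port does not starve the rest.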

Large Buffers with VOQ for Asymmetric Loads:

For heavily loaded networks, such as “spine” applications, or for seamless access to storage, compute and application resources that enables scale-out across networks with lossless performance, larger buffers and VOQ (Virtual Output Queuing) are key. Web applications that move large volumes of storage data, map-reduce Hadoop clusters, and search or database queries are the key targets. Well-designed store-and-forward switching is the optimal answer to proactively manage and prevent congestion.
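The benefit of virtual output queuing is easiest to see next to a single input FIFO. The sketch below is a simplified, generic illustration in Python, not Arista's implementation: it leaves out the scheduler and any credit mechanism a real switch would use, and the names `VoqIngress` and `fifo_dequeue` are hypothetical.

```python
from collections import deque


class VoqIngress:
    """One ingress port that keeps a separate queue per output port."""

    def __init__(self, num_outputs):
        self.queues = [deque() for _ in range(num_outputs)]

    def enqueue(self, packet, output):
        self.queues[output].append(packet)

    def dequeue_ready(self, output_ready):
        """Send one packet to each output that can currently accept one."""
        sent = []
        for out, queue in enumerate(self.queues):
            if output_ready[out] and queue:
                sent.append((out, queue.popleft()))
        return sent


def fifo_dequeue(fifo, output_ready):
    """Single shared FIFO for comparison: only the head packet may leave."""
    if fifo and output_ready[fifo[0][0]]:
        return [fifo.popleft()]
    return []


if __name__ == "__main__":
    ready = [False, True]  # output 0 is congested, output 1 is free

    fifo = deque([(0, "A"), (1, "B")])
    print(fifo_dequeue(fifo, ready))    # head packet A blocks B behind it

    ingress = VoqIngress(num_outputs=2)
    ingress.enqueue("A", output=0)
    ingress.enqueue("B", output=1)
    print(ingress.dequeue_ready(ready))  # B leaves; A waits in its own queue
```

With one queue per output, a congested destination only holds back the traffic headed to it, which is the head-of-line blocking problem that VOQ is meant to avoid under asymmetric loads.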

Arista’s recent introduction of the Arista 7500 series is a fitting example of this architecture. With an unprecedented 384 wire-speed L2/L3 10GE ports and 18+ gigabytes of packet buffer in a single 11RU chassis, you can guarantee predictable access between all nodes. In such asymmetric cases, cycles cannot be wasted while an application waits for data to show up, or indirectly while it waits for another application process, and latency remains optimal at 4-14 microseconds.
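For a rough sense of scale, the figures above can be turned into a back-of-the-envelope calculation. The sketch below rests on assumptions that are not in the original text: it treats the buffer as exactly 18 decimal gigabytes (the text says 18+) and splits it evenly across the 384 ports, which is not necessarily how the hardware partitions memory. The function names are hypothetical.

```python
TOTAL_BUFFER_BYTES = 18 * 10**9   # assumption: exactly 18 GB, decimal units
PORTS = 384                        # 10GE ports, from the text
PORT_RATE_BPS = 10 * 10**9         # 10 Gb/s line rate per port


def per_port_buffer_bytes(total=TOTAL_BUFFER_BYTES, ports=PORTS):
    """Average buffer per port if the memory were split evenly (an assumption)."""
    return total / ports


def drain_time_ms(buffer_bytes, rate_bps=PORT_RATE_BPS):
    """Time to drain a full buffer of this size at line rate, in milliseconds."""
    return buffer_bytes * 8 / rate_bps * 1000


if __name__ == "__main__":
    per_port = per_port_buffer_bytes()
    print(f"{per_port / 1e6:.3f} MB of buffer per port")
    print(f"{drain_time_ms(per_port):.1f} ms of traffic absorbed at 10 Gb/s")
```

Under those assumptions each port averages roughly 47 MB of buffer, which corresponds to tens of milliseconds of line-rate traffic on a 10GE port.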

Old Connectivity versus New Application Focus:

The oversubscribed networks of the past were predicated on well-defined email (1MB) transfers, treating networking as port connectivity with north-south traversal and latency of 100+ microseconds, whereas the modern data center requires consistent performance based on more vertical application patterns with east-west traversals.

This is why Arista Networks was awarded Interop 2010’s #1 Best of Show for its flagship 7500 and also placed #1 in the Network World Test for the 7100 series this year, beating out established vendors. I want to take this opportunity to thank Arista’s fans and customers for your steadfast recognition of our best-of-breed products as well as our architecture. As always, I welcome your comments at feedback@arista.com.


For decades, the industry had accepted a status quo plagued by fragile, overly complex, disparate operating systems, rigid hardware controllers,...

/Images%20(Marketing%20Only)/Blog/Many-Facets-AI-Blog-Image.jpg)

As computational resources scale to meet the demands of large generative artificial intelligence (AI) models, networking plays a crucial role in...

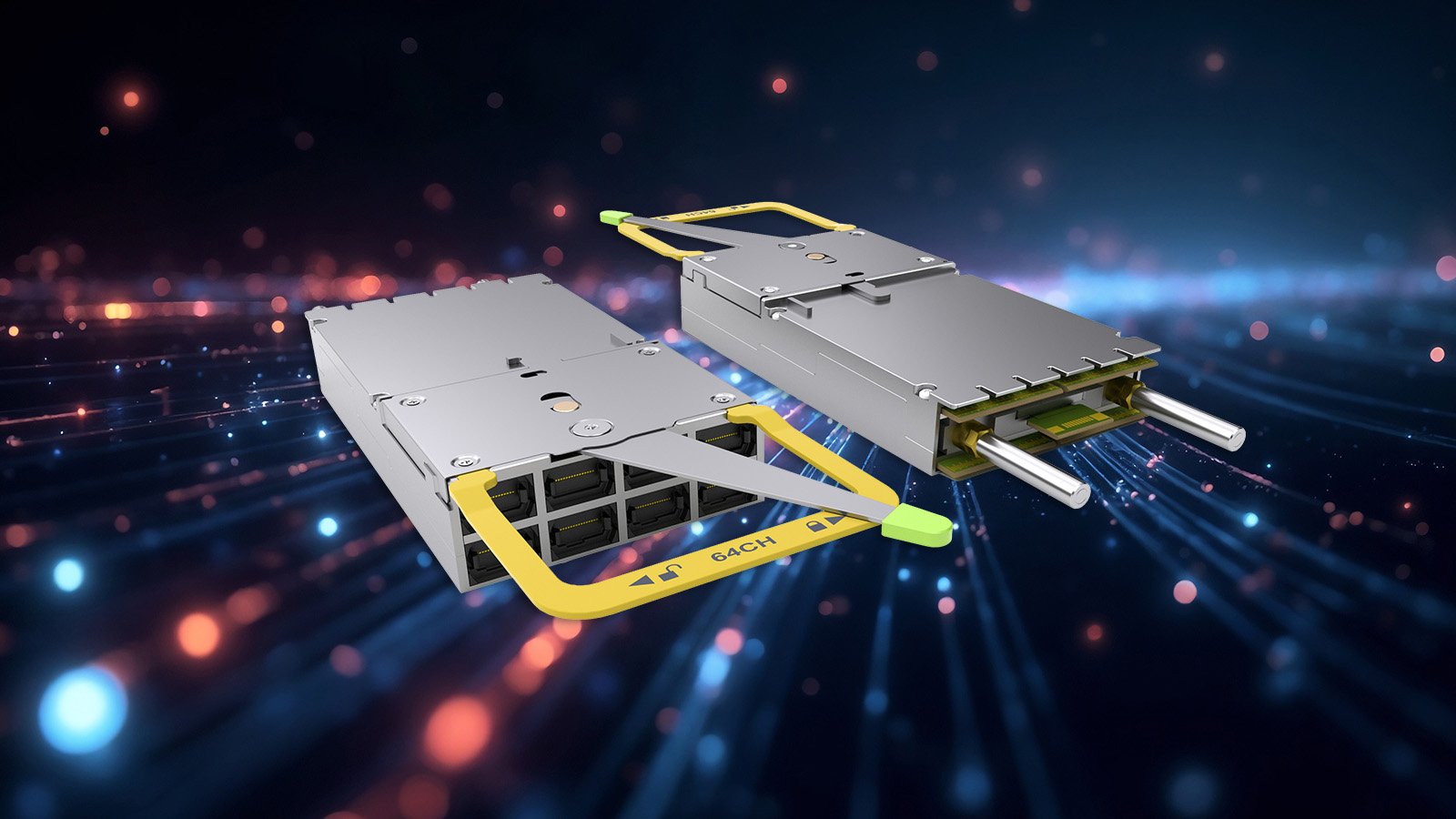

In 2016, Arista Networks together with powerful industry leaders, announced the OSFP (Octal Small Form-Factor Pluggable) specification and...