The Cognitive Campus Center Journey

For decades, the industry had accepted a status quo plagued by fragile, overly complex, disparate operating systems, rigid hardware controllers,...


In 1984, Sun famously declared, “The Network is the Computer.” Forty years later, that idea is coming true again with the advent of AI. The collective nature of AI model training relies on a lossless, highly available network to seamlessly connect every GPU in the cluster to one another and enable peak performance. Networks also connect trained AI models to end users and to other systems in the data center, such as storage, allowing the system to become more than the sum of its parts. As a result, data centers are evolving into new AI Centers where the network becomes the epicenter of AI management.

Trends in AI

To appreciate this, let’s first look at the explosion of AI datasets. As large language models (LLMs) grow, parallelizing AI training across many GPUs becomes inevitable: no single GPU can keep up with the massive parameter counts and dataset sizes. AI parallelization, be it data, model, or pipeline, is only as effective as the network that interconnects the GPUs. GPUs must exchange their local gradients and compute global gradients to adjust the model’s weights. To do so, the disparate components of the AI puzzle have to work cohesively as one single AI Center: GPUs, NICs, interconnecting accessories such as optics/cables, storage systems, and most importantly the network in the center of them all.
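The gradient exchange described above can be illustrated with a minimal sketch in plain Python. It simulates what an all-reduce produces in data-parallel training: every worker ends up with the element-wise mean of all local gradients and then applies the same weight update. The function names, the four-worker setup, and the numbers are illustrative assumptions, not taken from any particular collective communication library.

```python
from typing import List


def all_reduce_mean(local_grads: List[List[float]]) -> List[float]:
    """Element-wise mean of per-worker gradients (the result an all-reduce yields)."""
    num_workers = len(local_grads)
    dim = len(local_grads[0])
    if any(len(g) != dim for g in local_grads):
        raise ValueError("all workers must hold gradients of the same shape")
    return [sum(g[i] for g in local_grads) / num_workers for i in range(dim)]


def sgd_step(weights: List[float], global_grad: List[float], lr: float) -> List[float]:
    """Plain gradient-descent update applied identically on every worker."""
    return [w - lr * g for w, g in zip(weights, global_grad)]


# Four hypothetical workers, each holding the gradient from its own data shard.
local_grads = [
    [1.0, -2.0],
    [3.0, 0.0],
    [1.0, 2.0],
    [3.0, 4.0],
]

global_grad = all_reduce_mean(local_grads)            # [2.0, 1.0]
new_weights = sgd_step([1.0, 1.0], global_grad, 0.5)  # [0.0, 0.5]
print(global_grad, new_weights)
```

In a real cluster this averaging happens over the network on every training step, which is why a slow or lossy link between any two GPUs holds back all of them.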

Today’s Network Silos

There are many causes of suboptimal performance in today’s AI-based data centers. First and foremost, AI networking demands consistent end-to-end Quality of Service for lossless transport. This means that the NICs in a server, as well as the networking platforms, must have uniform markers/mappings, accurate controls and congestion notifications (PFC and ECN with DCQCN), and appropriate buffer utilization thresholds. With these in place, each component can react promptly to network events such as congestion, and the sender can precisely control the traffic flow rate to avoid packet drops. Today, the NICs and networking devices are configured separately. Any configuration mismatch can be extremely difficult to debug in large AI networks.
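To make the mismatch problem concrete, here is a minimal sketch of a consistency check across NICs and switches. The `LosslessQosProfile` fields, the device names, and the DSCP and priority values are all hypothetical placeholders chosen for illustration; they do not reflect any vendor's configuration schema.

```python
from dataclasses import asdict, dataclass
from typing import Dict, List


@dataclass(frozen=True)
class LosslessQosProfile:
    """Hypothetical subset of lossless-transport settings for one device."""
    rdma_dscp: int     # DSCP marking used for RDMA traffic
    pfc_priority: int  # priority on which PFC is enabled
    ecn_enabled: bool  # whether ECN is enabled for that traffic class


def find_mismatches(reference: LosslessQosProfile,
                    devices: Dict[str, LosslessQosProfile]) -> List[str]:
    """Report every device field that differs from the reference profile."""
    expected = asdict(reference)
    problems = []
    for name, profile in sorted(devices.items()):
        for field, value in asdict(profile).items():
            if value != expected[field]:
                problems.append(f"{name}: {field}={value!r}, expected {expected[field]!r}")
    return problems


# Illustrative values only; real deployments choose their own markings.
reference = LosslessQosProfile(rdma_dscp=26, pfc_priority=3, ecn_enabled=True)
devices = {
    "leaf-switch-1": LosslessQosProfile(26, 3, True),
    "gpu-host-7-nic0": LosslessQosProfile(26, 4, True),  # PFC on the wrong priority
}

for line in find_mismatches(reference, devices):
    print(line)
```

When NICs and switches are configured by separate tools, a check like this has to be stitched together by hand; a single point of control makes the comparison trivial.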

A common reason for poor performance is component failure. Servers, GPUs, NICs, transceivers, cables, switches, and routers can fail, resulting in go-back-N retransmissions or, even worse, stalling an entire job, which leads to huge performance penalties. The probability of a component failure becomes even more pronounced as the cluster size grows. Traditionally, GPU vendors’ collective communication libraries (CCLs) try to discover the underlying network topology using localization techniques, but discrepancies between the discovered topology and the actual one can severely impact job completion times of AI training.
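A rough back-of-the-envelope model shows why cluster size matters so much here. Assuming, purely for illustration, that each component fails independently with the same probability p during a job, the chance that at least one of N components fails is 1 - (1 - p)^N. The per-component probability used below is a made-up figure, not a measured failure rate.

```python
def prob_any_failure(num_components: int, p_fail: float) -> float:
    """Probability that at least one of num_components fails.

    Assumes independent failures with identical probability p_fail,
    which is a simplification of real hardware behavior.
    """
    if not 0.0 <= p_fail <= 1.0:
        raise ValueError("p_fail must be between 0 and 1")
    return 1.0 - (1.0 - p_fail) ** num_components


# Hypothetical per-component failure probability over one training job.
P_FAIL = 0.001

for n in (100, 1_000, 10_000):
    print(f"{n:>6} components: {prob_any_failure(n, P_FAIL):.3f}")
```

Under these assumptions, a failure that is unlikely with a hundred components becomes close to certain with ten thousand, so fast detection and isolation matter more than preventing every individual fault.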

Another aspect of AI networks is that most operators have separate teams designing and managing distinct compute vs. network infrastructures. This involves the use of different orchestration systems for configuration, validation, monitoring, and upgrades. The lack of a single point of control and visibility makes it extremely difficult to identify and localize performance issues. All of these problems are exacerbated as the size of the AI cluster grows.

It’s easy to see how these silos can grow deeper to compound the problem. Split operations between compute and networking can lead to challenges linking the technologies together for optimum performance, and to delays in diagnosing and resolving performance degradation or outright failures. Networking itself can bifurcate into islands of InfiniBand HPC clusters distinct from Ethernet-based data centers. This, in turn, can limit investment protection and make it harder both to pass data between the islands, forcing the use of awkward gateways, and to link compute to storage and to end users. Focusing on any one technology (such as compute) in isolation from all other aspects of the holistic solution ignores the interdependent and interconnected nature of the technologies as shown below.

[Figure: AI-Blog-Art2.png]

Rise of the New AI Center

The new AI Center recognizes and embraces the totality of this modern, interdependent ecosystem. The whole system rises together for optimum performance rather than foundering in isolation as with prior network silos. GPUs need an optimized, lossless network to complete AI training in the shortest time possible, and then those trained AI models need to connect to AI inference clusters to enable end users to query the model. Compute nodes, spanning both GPUs / AI accelerators and CPUs / general compute, need to communicate with and connect to storage systems as well as other IT systems in the existing data center. Nothing works alone. The network acts as connective tissue to spark all of those points of interaction, much as a nervous system provides pathways between neurons in humans.

The value in each is the collective outcome enabled by the total system linked together as one, not in the individual components acting alone. For people, the value comes from the thoughts and actions enabled by the nervous system, not the neurons alone. Similarly, the value of an AI Center is the output consumed by end users solving problems with AI, enabled by training clusters linked to inference clusters linked to storage and other IT systems, integrated into a lossless network as the central nervous system. The AI Center shines by eliminating silos to enable coordinated performance tuning, troubleshooting, and operations, with the central network playing a pivotal role to create and power the linked system.

[Figure: JU-Blog-AI-Center.png]

Arista EOS Powers AI Centers

EOS® is Arista's best-in-class operating system that powers the world's largest scale-out AI networks, bringing together all parts of the ecosystem to create the new AI Center. If a network is the nervous system of the AI Center, then EOS is the brain driving the nervous system.

A new innovation from Arista, built into EOS, further extends the interconnected concept of the AI Center by more closely linking the network to connected hosts as a holistic system. EOS extends network-wide control, telemetry, and lossless QoS characteristics from network switches down to a remote EOS agent running on NICs in directly attached servers/GPUs. With the remote agent deployed on the AI NIC/server, the switch becomes the epicenter of the AI network, able to configure, monitor, and debug problems on the AI hosts and GPUs. This provides a single, uniform point of control and visibility. With the remote agent, configuration consistency, including end-to-end traffic tuning, can be ensured across hosts and network as a single homogeneous entity. Arista EOS enables AI Center communication for instantaneous tracking and reporting of host and network behaviors. Because EOS running in the network communicates with the remote agent on the host, failures can be isolated more readily. EOS can also directly report the network topology, centralizing topology discovery and leveraging familiar Arista EOS configuration and management constructs across all Arista Etherlink™ platforms and partners.
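To illustrate why a centrally reported topology helps, the sketch below compares a set of links reported by the network against a set discovered from the host side and lists the discrepancies. This is not Arista's EOS agent or any EOS API; the `Link` representation, the function name, and the host and port names are hypothetical, chosen only to show the idea.

```python
from typing import Dict, Set, Tuple

# A link is modeled as (host-side port, switch-side port); purely illustrative.
Link = Tuple[str, str]


def diff_topology(reported: Set[Link], discovered: Set[Link]) -> Dict[str, Set[Link]]:
    """Return links that appear in only one of the two topology views."""
    return {
        "missing_from_discovery": reported - discovered,
        "unexpected_in_discovery": discovered - reported,
    }


# Hypothetical view reported by the network.
reported = {
    ("gpu-host-1:nic0", "leaf-1:Ethernet1"),
    ("gpu-host-2:nic0", "leaf-1:Ethernet2"),
}
# Hypothetical view inferred from the host side, with one wrong link.
discovered = {
    ("gpu-host-1:nic0", "leaf-1:Ethernet1"),
    ("gpu-host-2:nic0", "leaf-2:Ethernet2"),
}

for kind, links in diff_topology(reported, discovered).items():
    for link in sorted(links):
        print(kind, link)
```

If both views come from one authoritative source, the two sets are identical by construction and this class of discrepancy does not arise.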

Rich ecosystem of partners including AMD, Broadcom, Intel and NVIDIA

With the goal of building robust, hyperscale AI networks that have the lowest job completion times, Arista is bringing together the entire AI Center ecosystem of network switches, NICs, transceivers, cables, GPUs, and servers so that it can be configured, managed, and monitored as a single unit. This reduces TCO and improves productivity across compute and network domains. The AI Center vision is a first step in enabling open, cohesive interoperability and manageability between the AI network and the hosts. We are staying true to our commitment to open standards with Arista EOS, leveraging OpenConfig to enable AI Centers.

We are proud to partner with our esteemed colleagues to make this possible.

Welcome to the new open world of AI Centers!

For decades, the industry had accepted a status quo plagued by fragile, overly complex, disparate operating systems, rigid hardware controllers,...

/Images%20(Marketing%20Only)/Blog/Many-Facets-AI-Blog-Image.jpg)

As computational resources scale to meet the demands of large generative artificial intelligence (AI) models, networking plays a crucial role in...

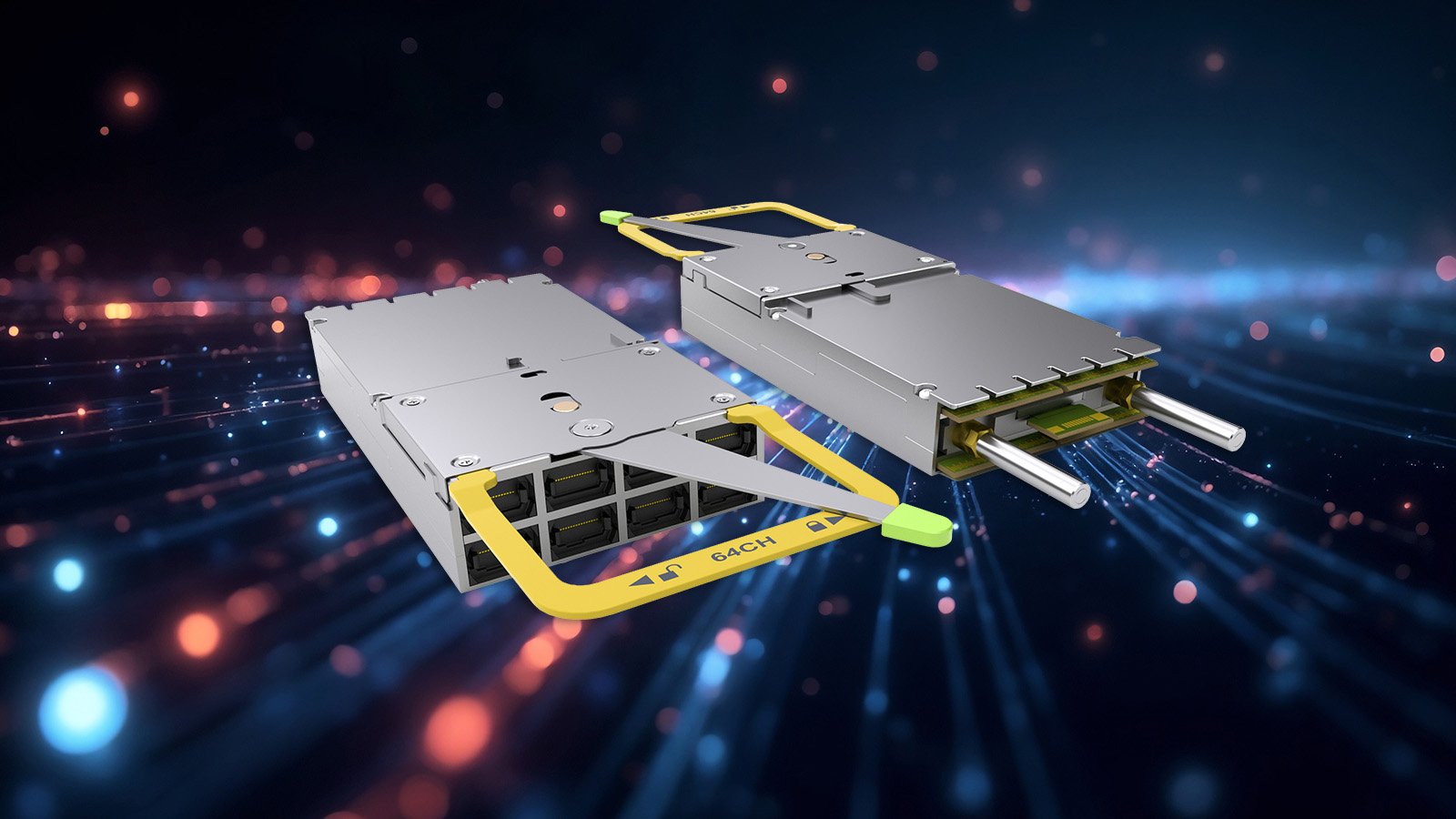

In 2016, Arista Networks together with powerful industry leaders, announced the OSFP (Octal Small Form-Factor Pluggable) specification and...